Most leaders believe AI is making them more effective. In many cases, the opposite is happening.

The leaders using AI the most are often the least prepared: producing more output but doing less thinking. The issue isn’t the technology. It’s a leadership choice about how they use it.

Better outcomes don’t come from humans alone. They don’t come from machines alone. They come from human judgment deliberately paired with machine intelligence.

Yet that’s not what most organizations are doing today.

In the early days of our AI venture studio, Nobody Studios, we saw this pattern clearly.

Production Without Understanding

Founders would arrive exceptionally well-prepared, at least on the surface. Pitch decks were polished, customer personas clearly defined, go-to-market strategies mapped out, and financial models built to investor-ready standards. They had everything one would hope for from disciplined, high-potential teams.

Then we spent time with them.

Within a couple of hours—asking questions, probing assumptions, exploring trade-offs—the substance began to unravel. Not because the ideas lacked merit, but because the thinking behind them was shallow. One, perhaps two layers deep at most. There was little genuine differentiation, no clear unfair advantage, and no evidence that difficult decisions had been made or trade-offs resolved.

All polish, no substance.

Like a display cake in a shop window, the work looked perfect on the outside but was hollow inside.

What we were reviewing was not preparation. It was production without understanding.

The founders had not done the hard thinking themselves. Instead, they had displaced it onto the artifacts, onto their audience, and onto us, expecting our review to supply the thinking they had skipped.

From that day forward, we stopped treating the speed or volume of production as a measure of performance or success. Instead, the experience led us to ask a much sharper question: Does this work help you make better decisions?

Because that is the only question that matters.

The Rise of the Productivity Flex

What we observed in those founders is now widespread across organizations.

I refer to it as productivity flex: the use of AI to generate visible output without improving the quality of thinking behind it.

Productivity flex replaces thinking with output. It signals effort. It creates the appearance of progress. It rarely produces better outcomes.

The pattern is easy to spot. A deluge of output: multiple agents built in a weekend, extensive strategy documents produced in hours, workflows automated end-to-end. Yet what is missing is any real signal amid the noise—any indication that people are more prepared, more present, or more decisive under pressure.

Productivity flex optimizes for visibility, not effectiveness.

At scale, this is not benign. It produces organizations that appear highly active yet struggle to make coherent, timely decisions. Dashboards multiply, updates increase, and activity intensifies, but clarity diminishes. Production begins to outpace thinking, and decision quality suffers.

This is not confined to early-stage ventures. The same pattern is visible in executive teams, boardrooms, and large enterprises across industries.

According to MIT’s 2025 report The GenAI Divide, 95% of generative AI pilots fail. Not slow value, not delayed value: no measurable impact. Meanwhile, 74% of companies are stuck in pilot purgatory, experimenting endlessly and scaling nothing. And while 90% of organizations are using AI, fewer than 40% see any EBIT impact, and for those that do, the gains are under 5%.

The real divide is no longer between organizations adopting AI and those that are not. It is between those improving the quality of their thinking and those simply increasing the volume of their output.

Most leaders think they are in the top-right, improving both the quality of their thinking and their output. Most are operating in the bottom-right: more output, no better thinking.

What the Evidence Shows

If this were merely anecdotal, it would be easy to dismiss. It is not.

In 2025, Harvard researchers conducted a large-scale field experiment involving 776 professionals at Procter & Gamble, working on real innovation challenges.

The results were instructive.

Individuals using AI performed at a level comparable to teams operating without it. In effect, AI began to replicate many of the advantages traditionally associated with collaboration.

More significantly, when teams combined human judgment with AI, the likelihood of producing top-tier solutions nearly tripled.

This is not an incremental improvement. It is a different mode of performance.

AI enhances recall, expands the range of options considered, and reduces cognitive load. It enables individuals to process more information and explore more possibilities than would otherwise be feasible.

But it does not determine what matters. It does not make trade-offs. It does not assume responsibility for outcomes. Those remain inherently human tasks.

The advantage does not come from AI alone, nor from human capability in isolation. It comes from the deliberate integration of both.

That advantage compounds when you redesign how you use AI to capture, transcribe, synthesize, and act on your work. Once we made this discovery, we re-engineered how the studio’s entire idea lab operated. The result was better ideas, better businesses, and better judgment about where to invest, what to stop, and what to do differently.

When Judgment Disappears, the System Breaks Down

The consequences of misunderstanding this are already visible.

In 2025, Deloitte acknowledged that a report produced for the Australian government using generative AI contained fabricated citations and substantive errors. The firm agreed to refund part of its fee of approximately AUD 440,000 and absorbed the reputational damage.

This was not a failure of the technology. It was a failure of judgment.

The output was accepted without sufficient scrutiny. Assumptions were not challenged. Accountability was not exercised.

The system did not fail to generate content; leadership failed to validate it.

This is the risk of machine-only leadership: an over-reliance on outputs that appear credible but have not been rigorously evaluated.

Equally problematic, though less visible, is the gradual erosion of a leader’s ability to distinguish between a well-reasoned decision and a well-articulated one.

Over time, the discipline of judgment weakens—both for individual leaders and for organizations—and the results of that erosion can be catastrophic.

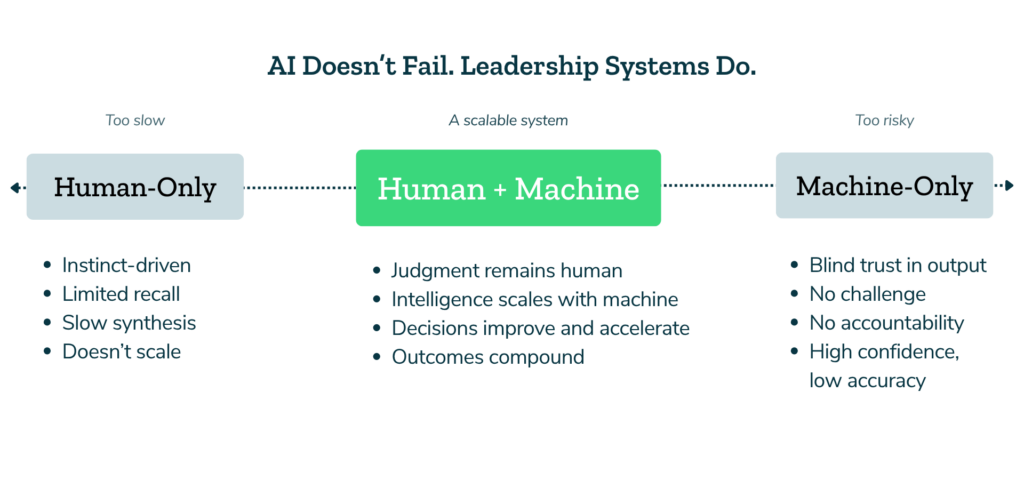

Two Failure Modes

In practice, most organizations oscillate between two ineffective extremes.

The first is human-only leadership, where decisions rely heavily on instinct, experience, and individual pattern recognition. These qualities remain valuable, but they no longer scale in an environment where others are augmenting their judgment with machine-supported insight.

Leaders operating in this mode will increasingly find themselves outpaced—not because they lack capability, but because they are working with a narrower field of information and slower synthesis.

The second is machine-only leadership, where outputs are accepted with minimal interrogation. In this mode, speed increases, but decision quality deteriorates. Errors compound, and the ability to critically evaluate information diminishes over time.

Neither approach is sufficient. AI simply exposes which leadership mode you are in.

Better outcomes don’t come from humans alone. They don’t come from machines alone. They come from human judgment deliberately paired with machine intelligence.

This is not a philosophical distinction. It is operational. It determines how decisions are made, how work is structured, and where accountability ultimately sits.

Therefore, the advantage is not AI. It is how you design for judgment.

This is not a tool choice. It is a system design choice.

Human-only leadership doesn’t scale. Machine-only leadership doesn’t hold. The advantage comes from getting the best of both, and it must be designed deliberately.

Redesigning How Work Happens

Once this became clear, our response within Nobody Studios, and my approach when working with organizations such as American Airlines, Slack, Wells Fargo, HSBC, and Progyny, was not to introduce more tools but to redesign how judgment flows through the work.

Four principles proved consistently effective.

First, context capture becomes foundational. Conversations, decisions, and emerging patterns are treated as assets rather than transient events. When context is preserved, thinking compounds.

Second, AI is used to challenge assumptions rather than confirm them. Its value lies not in reinforcing existing views, but in exposing gaps, contradictions, and overlooked risks.

Third, synthesis precedes action. Before decisions are made, inputs are integrated into a coherent view: what is known, what is assumed, and what would need to be true for the decision to hold.

Finally, decisions are made explicit and testable. If a decision cannot be clearly articulated—including its assumptions and trade-offs—it has not truly been made.

The result is not more output, but better decisions—made faster, with greater clarity, stronger context, and fewer reversals.

In Artificial Organizations, we define and measure this as decision velocity and decision advantage—how quickly you move, and how consistently your decisions lead to better outcomes.

This is how you move beyond productivity flex.

The Real Divide

The most significant shift underway is not technological, but behavioral.

Many leaders remain focused on increasing output—producing more documents, generating more analysis, and demonstrating visible activity. These signals are easy to measure and communicate.

However, the leaders who are pulling ahead are optimizing for something less visible but far more consequential: the quality of their thinking under pressure.

They make decisions more quickly, with greater clarity and fewer reversals. They enter critical conversations calmer, more prepared, and more present—not burdened by fragmented context or cognitive overload. They are able to articulate trade-offs clearly and commit to a course of action with confidence.

This is not a function of working harder. It is a function of working differently.

Where to Start

The shift does not require a wholesale transformation to begin.

A practical starting point is a single decision that matters.

Rather than using AI to generate more options or produce additional artifacts, use it to interrogate your thinking. Identify assumptions, surface what may be missing, and pressure-test the preferred course of action.

Then decide—and take responsibility for that decision. This is the work that cannot be delegated.

Final Thought

AI will not do your thinking for you. But it will reveal, with increasing clarity, whether that thinking has been done.

That is the shift underway.

Not from human to machine, but from output to judgment.

The leaders who recognize this early will not simply move faster. They will make better decisions—and that advantage will compound and accelerate over time.

Those who do not will remain busy and visible, keep flexing, and fall increasingly behind.

Thank you to Maryam Aidini, John Cutler, Martin Eriksson, and Jonny Schneider for challenging early drafts of this post. Check out their latest work:

- Lean Experimentation in Action by Maryam Aidini

- Single Player Mode vs Multiplayer Mode by John Cutler

- The Decision Stack by Martin Eriksson

- Lunastak, a strategy development app by Jonny Schneider

FAQ

Q1. What is the biggest mistake leaders make with AI?

They start with tools instead of decisions. Without clarity on how they actually work and decide, AI just increases output without improving results.

Q2. Why does more AI sometimes lead to worse decisions?

AI amplifies existing workflows. If those workflows are unclear, you get faster activity but weaker judgment.

Q3. How should leaders actually use AI?

As a thinking partner. It should help you synthesize information, challenge assumptions, and focus on better decisions.

Q4. Where should I start with AI in my work?

Start with one workflow, like meetings. Capture, transcribe, synthesize, and act—then build from there.

Q5. Can this work without rolling AI out across the whole company?

Yes. The most effective leaders start personally, and the impact spreads through how they work.

References

- Harvard Business School: AI and Human Collaboration Research

- Deloitte Faces Backlash Over AI Errors in Government Report

- CTSA Model: AI Decision-Making Framework

- Nobody Studios: Building 100 AI Companies Over Five Years

- American Airlines: Leadership and AI Transformation

- Slack: Leadership and Decision-Making in Fast-Changing Environments

- The GenAI Divide: State of AI in Business 2025